Evaltex


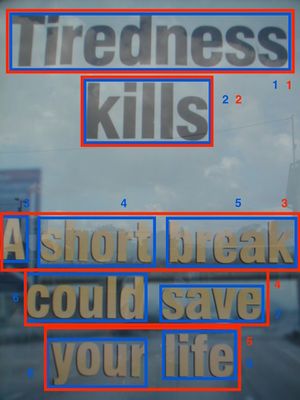

EvaLTex (Evaluating Text Localization) is a unified evaluation framework used to measure the performance of text detection and text segmentation algorithms. It takes as input text objects represented either by rectangle coordinates or by irregular masks. The output consists of a set of scores, at local and global levels, and a visual representation of the behavior of the analysed algorithm through quality histograms.

For more details on the evaluation protocol, read the scientific paper published in the Image and Vision Computing journal and the Ph.D. thesis. Details on the visual representation of the evaluation can be found in the article published in the Proc. of the International Conference on Document Analysis and Recognition. To use the protocol for segmentation purposes, please see the article published in the Proc. of the International Workshop on Robust Reading.

Please cite the following papers in all publications that use EvaLTex:


Performance evaluation measures

Local evaluation

For each GT object matched by a detection, we assign two quality measures, Coverage (Cov) and Accuracy (Acc):

- Cov is the ratio of the matched area to the GT object area

- Acc is the ratio of the matched area to the detection area

Both quality metrics are adapted to the type of matching (one-to-one, one-to-many, many-to-one or many-to-many). For more details, please refer to the scientific paper published in the Image and Vision Computing journal and to Chapter 3 of the Ph.D. thesis.
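As a minimal sketch of the two definitions in the simplest, one-to-one case, the Python snippet below computes Cov and Acc for one axis-aligned GT box and one detection box given in the (x, y, width, height) form of the input files. It assumes continuous coordinates (a box spans x to x + width) and deliberately ignores the adaptations EvaLTex applies to one-to-many, many-to-one and many-to-many matches; the names `Box`, `intersection_area` and `cov_acc_one_to_one` are illustrative, not part of the tool.

```python
from __future__ import annotations

from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned box in the (x, y, width, height) form of the input .txt files."""
    x: float
    y: float
    w: float
    h: float

    @property
    def area(self) -> float:
        return self.w * self.h


def intersection_area(a: Box, b: Box) -> float:
    """Overlap area of two axis-aligned boxes (0 if they are disjoint)."""
    dx = min(a.x + a.w, b.x + b.w) - max(a.x, b.x)
    dy = min(a.y + a.h, b.y + b.h) - max(a.y, b.y)
    return max(dx, 0) * max(dy, 0)


def cov_acc_one_to_one(gt: Box, det: Box) -> tuple[float, float]:
    """Cov and Acc of a single GT/detection pair (one-to-one matching only)."""
    matched = intersection_area(gt, det)
    cov = matched / gt.area   # matched area relative to the GT object area
    acc = matched / det.area  # matched area relative to the detection area
    return cov, acc


# A detection covering exactly the right half of a 100x50 GT box:
print(cov_acc_one_to_one(Box(0, 0, 100, 50), Box(50, 0, 50, 50)))  # (0.5, 1.0)
```

The example shows why both measures are needed: the detection lies entirely inside the GT object (Acc = 1) yet covers only half of it (Cov = 0.5).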

Global evaluation

The global evaluation consists of a set of measurements: a global recall $R$, a quantity recall $R_{quant}$, a quality recall $R_{qual}$, a global precision $P$, a quantity precision $P_{quant}$, a quality precision $P_{qual}$, a Split metric and an overall F-Score $F$. In addition, the tool provides two histogram representations of the local qualities and a derived pair of recall and precision metrics computed using histogram distances. To define these metrics, we use the following notation:

- $|G|$ = number of GT objects in the image/dataset

- $TP$ = number of true positives (GT objects that were detected)

- $FP$ = number of false positives (detections with no correspondence in the GT)

Recall. The Recall $R$ measures the amount of detected text and is defined as the product of two terms:

$$R = \frac{TP}{|G|} \times \frac{\sum_{i=1}^{TP} Cov_i}{TP}$$

The left term of the product is the ratio between the number of true positives and the total number of GT objects. We interpret this ratio as the quantity recall $R_{quant}$, as it describes the percentage of detected GT objects, regardless of their coverage:

$$R_{quant} = \frac{TP}{|G|}$$

The second term is obtained by averaging the coverage rates of all detected GT objects. Intuitively, we denote this proportion as the quality recall $R_{qual}$, as it characterizes the mean covered surface of the GT:

$$R_{qual} = \frac{\sum_{i=1}^{TP} Cov_i}{TP}$$

Precision. By applying the same reasoning, we obtain the following decomposition of the global Precision $P$:

$$P = \frac{TP}{TP + FP} \times \frac{\sum_{i=1}^{TP} Acc_i}{TP}$$

Here again, the left term of the product gives insight into the percentage of detections that have a correspondence in the GT. Consequently, we call this measure the quantity precision $P_{quant}$:

$$P_{quant} = \frac{TP}{TP + FP}$$

Conversely, the right term is the average accuracy obtained from matching the detection set against the GT. This ratio is referred to as the quality precision $P_{qual}$:

$$P_{qual} = \frac{\sum_{i=1}^{TP} Acc_i}{TP}$$
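To make the decomposition concrete, here is a hedged Python sketch that derives the quantity and quality terms, and their products $R$ and $P$, from per-object Cov/Acc values. It assumes these values are already computed (one entry per true positive) and omits the optional Split integration; `global_scores` is a hypothetical helper, not EvaLTex code.

```python
def global_scores(covs, accs, n_gt, n_fp):
    """Recall/Precision decomposition from per-object quality values.

    covs, accs: Cov and Acc of each detected GT object (one entry per true positive).
    n_gt: total number of GT objects |G|; n_fp: number of false-positive detections.
    """
    if len(covs) != len(accs):
        raise ValueError("covs and accs must have one entry per true positive")
    tp = len(covs)
    if tp == 0:
        # Nothing detected: every score is 0.
        return {k: 0.0 for k in ("R_quant", "R_qual", "R", "P_quant", "P_qual", "P")}
    r_quant = tp / n_gt           # share of GT objects that were detected
    r_qual = sum(covs) / tp       # mean coverage of the detected GT objects
    p_quant = tp / (tp + n_fp)    # share of detections with a GT correspondence
    p_qual = sum(accs) / tp       # mean accuracy of the matches
    return {
        "R_quant": r_quant, "R_qual": r_qual, "R": r_quant * r_qual,
        "P_quant": p_quant, "P_qual": p_qual, "P": p_quant * p_qual,
    }
```

For instance, two detected GT objects out of four, with coverages 1.0 and 0.5, give $R_{quant} = 0.5$, $R_{qual} = 0.75$ and therefore $R = 0.375$.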

Split. The Split metric evaluates the level of GT fragmentation in a dataset and is computed as:

where the per-object term is the number of detections matching the GT object.

The Split measure can be used as an individual metric or integrated into the Recall computation. For more details, please refer to the scientific paper published in the Image and Vision Computing journal and to Chapter 3 of the Ph.D. thesis.

F-Score. As an overall metric, we use the well-known F-Score, defined as:

$$F = \frac{2 \times P \times R}{P + R}$$
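A direct transcription of the F-Score as a Python function; returning 0 when $P + R = 0$ is an assumption about how the degenerate case is handled, not something stated by the tool's documentation.

```python
def f_score(recall: float, precision: float) -> float:
    """Harmonic mean of Recall and Precision (0 when both are 0)."""
    if recall + precision == 0:
        return 0.0
    return 2 * recall * precision / (recall + precision)
```

Applied once to the Split-integrated recall and once to the recall without Split, this gives the two F-Score variants reported in the output files.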

Quality histograms.

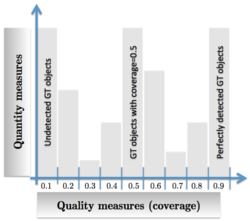

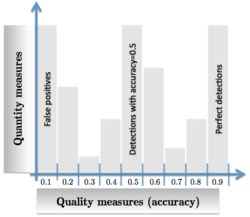

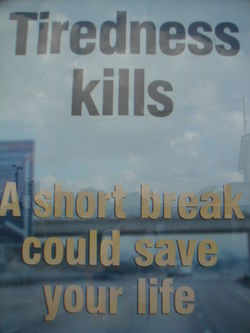

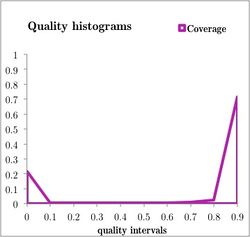

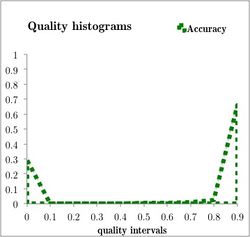

Histograms are convenient tools for representing the quality and quantity aspects of a set of detections simultaneously: the quality aspect is described by the histogram bins (each bin corresponds to a coverage or accuracy interval), while the quantity aspect is represented by the bin values (for example, a bin value counts how many GT objects have a coverage or accuracy value within that bin's interval). The coverage and accuracy histograms thus show at a glance different properties of the detection (or segmentation) behaviour, as illustrated in the figures above.
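The binning described above can be sketched as follows. This Python snippet assumes equal-width bins over [0, 1] (the number of bins being the `hist_bin_nb` setting) and places a value of exactly 1.0 in the last bin; whether EvaLTex uses the same bin edges is an assumption.

```python
def quality_histogram(values, n_bins):
    """Count how many Cov (or Acc) values fall into each of n_bins equal-width bins on [0, 1]."""
    if n_bins < 1:
        raise ValueError("n_bins must be >= 1")
    hist = [0] * n_bins
    for v in values:
        if not 0.0 <= v <= 1.0:
            raise ValueError(f"quality value outside [0, 1]: {v}")
        # int(v * n_bins) would be n_bins for v == 1.0, so clamp into the last bin.
        idx = min(int(v * n_bins), n_bins - 1)
        hist[idx] += 1
    return hist
```

For example, `quality_histogram([0.0, 0.05, 0.5, 0.95, 1.0], 10)` puts two objects in the first bin, one in the middle bin and two in the last bin.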

EMD metrics. As an alternative to the global scores explained above, the tool also provides a recall and a precision value obtained by computing the Earth Mover's Distance (EMD) between the coverage histogram (respectively the accuracy histogram) and an optimal histogram describing a perfect detection.
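For one-dimensional histograms with equally spaced bins and a ground distance of |i - j| between bins i and j, the EMD reduces to the L1 distance between the cumulative histograms, which is the identity the Python sketch below relies on. The shape of the optimal histogram (all objects in the top bin, i.e. quality 1) and the normalisation to unit mass are assumptions; the conversion of this distance into EvaLTex's recall and precision scores is described in the ICDAR paper and is not reproduced here.

```python
from itertools import accumulate


def emd_1d(h1, h2):
    """EMD between two 1-D histograms with the same number of bins.

    Both histograms are normalised to unit mass; with ground distance |i - j|
    between bins, the EMD equals the L1 distance between the cumulative sums.
    """
    if len(h1) != len(h2):
        raise ValueError("histograms must have the same number of bins")
    s1, s2 = sum(h1), sum(h2)
    if s1 == 0 or s2 == 0:
        raise ValueError("histograms must not be empty")
    c1 = accumulate(x / s1 for x in h1)
    c2 = accumulate(x / s2 for x in h2)
    return sum(abs(a - b) for a, b in zip(c1, c2))


def optimal_histogram(n_objects, n_bins):
    """Hypothetical 'perfect detection' histogram: every object in the top bin."""
    return [0] * (n_bins - 1) + [n_objects]
```

With four bins, a coverage histogram `[1, 0, 0, 1]` (one object missed, one fully covered) is at distance 1.5 from the optimal `[0, 0, 0, 2]`: half of the mass has to move three bins.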

For more details on the histogram representation and the EMD metrics, please refer to the scientific paper published in the Proc. of the International Conference on Document Analysis and Recognition and to Chapter 4 of the Ph.D. thesis.

Input format

To simplify the use of the EvaLTex tool for both detection and segmentation tasks, we unified the input format: text detection and text segmentation are both evaluated from .txt files containing the attributes of each text object (e.g. the coordinates of its bounding box).

Text detection tasks

Text detection results (i.e. words, lines or regions) can be represented either by bounding boxes or by masks. For text detection tasks using bounding boxes, a .txt file is enough. If the text objects are represented by irregular masks, an additional labeled image is required.

GT format. The GT for text detection (and also for text segmentation) uses the following format:

- img name

- image height, image width

- text object list (one per line) with the following attributes:

- ID: unique text object ID

- region ID: ID of the region to which the object belongs

- "transcription": can be empty

- text reject: flag that decides whether the text object is taken into account; set to f (default, the object is counted) or t (the object is ignored)

- x: x coordinate of the bounding box

- y: y coordinate of the bounding box

- width: width of the bounding box

- height: height of the bounding box

e.g.: img_1.txt

img_1
960,1280
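Since the object lines of the example above did not survive, the Python sketch below only assumes the field order of the attribute list, `ID,region ID,"transcription",reject,x,y,width,height`, with one object per line after the image name and the `height,width` line. It uses the `csv` module so that a quoted transcription may contain commas. `GTObject` and `parse_gt` are hypothetical names; this is not the tool's own parser.

```python
import csv
from dataclasses import dataclass


@dataclass
class GTObject:
    obj_id: int
    region_id: int
    transcription: str
    reject: bool  # True when the reject flag is 't' (object ignored)
    x: int
    y: int
    width: int
    height: int


def parse_gt(text):
    """Parse a GT file: image name, 'height,width', then one text object per line."""
    lines = [ln for ln in text.splitlines() if ln.strip()]
    name = lines[0].strip()
    height, width = (int(v) for v in lines[1].split(","))
    objects = []
    for row in csv.reader(lines[2:]):
        obj_id, region_id, transcription, reject, x, y, w, h = row
        objects.append(GTObject(int(obj_id), int(region_id), transcription,
                                reject.strip() == "t", int(x), int(y), int(w), int(h)))
    return name, (height, width), objects
```

Objects whose `reject` field is `True` would then simply be left out of the counts, which is what the reject option is meant to achieve.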

Detection format. The detection .txt file format differs slightly from the GT one: it contains neither the image size, nor the region ID, nor the text reject attribute. Hence, the detection file has the following format:

- img name

- text object list (one per line) with the following attributes:

- ID: unique text object ID

- "transcription": can be empty

- x: x coordinate of the bounding box

- y: y coordinate of the bounding box

- width: width of the bounding box

- height: height of the bounding box

e.g.: img_1.txt

img_1
1,"",272,264,392,186
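A detector's output can be serialised into this format with a few lines of Python. The sketch assumes that the image name sits on its own first line, that IDs are numbered consecutively from 1 and that transcriptions are always quoted, as in the example above; `format_detections` is a hypothetical helper.

```python
import csv
import io


def format_detections(image_name, boxes):
    """Serialise detections: image name, then 'ID,"transcription",x,y,width,height' per line.

    boxes: iterable of (x, y, width, height) tuples, optionally followed by a transcription.
    """
    out = io.StringIO()
    out.write(image_name + "\n")
    # QUOTE_NONNUMERIC quotes the transcription (even when empty) but not the numbers.
    writer = csv.writer(out, quoting=csv.QUOTE_NONNUMERIC, lineterminator="\n")
    for obj_id, box in enumerate(boxes, start=1):
        x, y, w, h, *rest = box
        text = rest[0] if rest else ""
        writer.writerow([obj_id, text, x, y, w, h])
    return out.getvalue()
```

`format_detections("img_1", [(272, 264, 392, 186)])` reproduces the example file above.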

Text mask representation

The interest of using masks rather than rectangles is to represent text strings not only in horizontal or vertical configurations, but also when they are tilted, circular, curved or in perspective. In such cases, the rectangular representation can disturb the matching process: a detection can accidentally match a GT object because of the object's orientation (inclined, curved, circular). To evaluate detections represented by masks, we need, in addition to the file format explained above, a set of labeled images for both the GT and the detection set.

The only difference between the GT format of the box representation and that of the mask representation lies in the region ID, since irregular mask annotation disables the use of the region tag. When dealing with rectangular boxes, regions are generated automatically from the coordinates of the GT objects, a region being the bounding box of several "smaller" boxes. When masks are annotated irregularly, regions cannot be generated automatically, so each GT object must have a different region ID. A simple way to achieve this is to give each object a region ID equal to its own ID.
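For the rectangular case, the idea that a region is the bounding box of several smaller boxes can be sketched as below. The snippet assumes that the grouping is given by the region IDs of the GT file and that a region is the smallest axis-aligned box enclosing all of its member boxes; `region_boxes` is a hypothetical helper, not the tool's implementation.

```python
from collections import defaultdict


def region_boxes(objects):
    """Bounding box (x, y, width, height) of each region.

    objects: iterable of (region_id, x, y, width, height) tuples.
    """
    inf = float("inf")
    # Per region: [min x, min y, max right edge, max bottom edge]
    extents = defaultdict(lambda: [inf, inf, -inf, -inf])
    for region_id, x, y, w, h in objects:
        e = extents[region_id]
        e[0] = min(e[0], x)
        e[1] = min(e[1], y)
        e[2] = max(e[2], x + w)
        e[3] = max(e[3], y + h)
    return {rid: (x0, y0, x1 - x0, y1 - y0) for rid, (x0, y0, x1, y1) in extents.items()}
```

Two words of one line, `(1, 10, 10, 20, 10)` and `(1, 40, 12, 30, 10)`, yield the region box `(10, 10, 60, 12)`.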


Text segmentation tasks

Evaluating text segmentation is very similar to evaluating text detection with a mask representation. For text segmentation we use one mask per character, whereas in text detection masks represent words, lines or regions.

Text segmentation GT format. As for text detection with masks, evaluating text segmentation requires, in addition to the .txt file, a labeled image in which each character has its own label. Each GT object corresponds to one character. Character-level GT objects cannot be grouped into regions, so each text object has a different region tag. The x, y, width and height attributes define the bounding box of each character.

e.g.: img_1.txt

img_1
960,1280

Notice that the rectangular mask shape in the segmentation GT example depicts a text object that should not be considered. This corresponds to setting the reject option to t for the text mask having the ID 16, as seen above.

Text segmentation result format. The result consists of a labeled image, as for the GT, and a detection .txt file containing the bounding box of each segmented connected component.

e.g.: img_1.txt

img_1
1,"",383,42,103,167
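With labeled images, the matched area becomes a pixel count. The Python sketch below computes Cov and Acc for one GT label and one result label, in the one-to-one case only. It assumes the two labeled images have already been loaded as equally sized 2-D grids of integer labels (reading the image files themselves is out of scope here); `mask_cov_acc` is a hypothetical helper.

```python
def mask_cov_acc(gt_labels, det_labels, g, d):
    """Pixel-level Cov and Acc between GT label g and detection label d.

    gt_labels, det_labels: equally sized 2-D grids (lists of rows) of integer
    labels, each text object carrying its own label value.
    """
    if len(gt_labels) != len(det_labels):
        raise ValueError("label images must have the same size")
    gt_area = det_area = matched = 0
    for gt_row, det_row in zip(gt_labels, det_labels):
        if len(gt_row) != len(det_row):
            raise ValueError("label images must have the same size")
        for a, b in zip(gt_row, det_row):
            in_gt = a == g
            in_det = b == d
            gt_area += int(in_gt)
            det_area += int(in_det)
            matched += int(in_gt and in_det)  # pixel belongs to both objects
    if gt_area == 0 or det_area == 0:
        raise ValueError("label not present in one of the images")
    return matched / gt_area, matched / det_area
```

Compared with the box-based version, a tilted or curved character only contributes its own pixels, so background enclosed by a bounding box no longer inflates the match.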

Output format

The evaluation results are given as .txt files in two forms: a file with the results obtained on the entire dataset and a file with the results for each image in the dataset. The per-image (local) file differs from the dataset (global) one in that it also lists the statistics (Cov, Acc and Split) of each GT object in the image.

Global evaluation for an entire dataset

EvaLTex statistics

EvaLTex statistics summarize the number of GT objects, detections, false positives and true positives in the dataset.

Global results FScore_noSplit=0.725095

The global scores are the default Recall score (with integrated Split), the Recall with no integrated Split, the Precision, as well as the default FScore (with integrated Split) and the FScore without the integrated Split.

Quantity results

Quantity results refer only to the number of detected GT objects and the number of detections with a match in the GT, regardless of their coverage or accuracy.

Quality results

The quality results contain two histograms representing the coverage and accuracy distributions over the dataset. EvaLTex outputs each histogram as an array of size n, where n is the chosen number of bins. Any visualization tool can be used to plot the quality histograms.
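As one tool-free option, an n-bin array can be rendered as a text bar chart. The Python sketch below assumes the histogram has already been read from the results file into a list of counts (the exact on-disk layout of the array is not reproduced here) and that the bins split [0, 1] evenly; `render_histogram` is a hypothetical helper.

```python
def render_histogram(hist, width=40):
    """Render an n-bin quality histogram over [0, 1] as a text bar chart."""
    n = len(hist)
    peak = max(hist) if n and max(hist) > 0 else 1
    rows = []
    for i, count in enumerate(hist):
        lo, hi = i / n, (i + 1) / n
        close = "]" if i == n - 1 else ")"  # the last bin includes quality 1.0
        bar = "#" * round(count / peak * width)  # bar length relative to the fullest bin
        rows.append(f"[{lo:.2f}, {hi:.2f}{close} {bar} {count}")
    return "\n".join(rows)


print(render_histogram([1, 0, 2], width=4))
```

A good detector shows most of its mass in the bottom rows (high coverage or accuracy); a long bar in the first row points to many missed or poorly covered objects.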

EMD results

As an alternative to the global scores, EvaLTex also computes two quality scores from the Coverage and Accuracy histograms using the EMD.

Local evaluation .txt file for each image

The local evaluation file, generated for each image of the dataset, has the same format as the dataset evaluation result file.

EvaLTex statistics - image img_1
Global results FScore_noSplit=0.572144
Quantity results
Quality results
EMD results FScore_noSplit=0.578853

In addition, it contains the accuracy, coverage and split values of every GT object in the image.

Local evaluation

Run the evaluation

The executable (EvaLTex) takes as input two .txt files, one for the ground truth and one for the detection/segmentation.

Usage:

./EvaLTex gt.txt det.txt res_dir [-a] [gtImgDir detImgDir]
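When the tool is driven from a script, the usage line can be turned into an argument list. This Python sketch only mirrors the bracket structure of the usage line: `gtImgDir` and `detImgDir` (presumably the labeled-image directories needed for mask inputs) must be given together, and `-a` is passed through untouched because its meaning is not described in this section. `evaltex_command` is a hypothetical helper.

```python
def evaltex_command(gt_txt, det_txt, res_dir, a_flag=False, gt_img_dir=None, det_img_dir=None):
    """Build the argument list for './EvaLTex gt.txt det.txt res_dir [-a] [gtImgDir detImgDir]'."""
    if (gt_img_dir is None) != (det_img_dir is None):
        raise ValueError("gtImgDir and detImgDir must be given together")
    cmd = ["./EvaLTex", gt_txt, det_txt, res_dir]
    if a_flag:
        cmd.append("-a")  # optional switch from the usage line, passed through as-is
    if gt_img_dir is not None:
        cmd += [gt_img_dir, det_img_dir]
    return cmd
```

The resulting list can be handed to `subprocess.run(cmd, check=True)` from the directory containing the executable and `evaltex.ini`.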


The configuration file (evaltex.ini) contains the parameter values needed for the evaluation process. It should be placed in the same directory as the executable.

Structure of evaltex.ini:

- region: boolean that decides whether to use the region option (default region=true)

- print_level: sets the level of output detail (default print_level=0)

- min_area: threshold on the minimum matched area for accepting a match between a GT object and a detection (default min_area=0)

- hist_bin_nb: number of bins used to generate the quality histograms and EMD scores (default hist_bin_nb=0)

- split: boolean that decides whether to integrate the split into the coverage (default split=true)

- det_border: tolerated border variation for text detection, expressed as a percentage (default det_border=0.01)
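A script that prepares runs may want to know which values the tool will use. The Python sketch below reads `evaltex.ini`-style content with the documented defaults as fallbacks. It assumes plain `key=value` lines with booleans spelled `true`/`false`, and skips comment or section-header lines in case the real file has them; the parsing rules and the helper name `read_evaltex_ini` are assumptions, not the tool's own reader.

```python
DEFAULTS = {
    "region": "true",
    "print_level": "0",
    "min_area": "0",
    "hist_bin_nb": "0",
    "split": "true",
    "det_border": "0.01",
}


def read_evaltex_ini(text):
    """Parse 'key=value' lines into typed settings, falling back to the documented defaults."""
    raw = dict(DEFAULTS)
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith(("#", ";", "[")):
            continue  # skip blank lines, comments and any section header
        key, sep, value = line.partition("=")
        if sep:
            raw[key.strip()] = value.strip()
    return {
        "region": raw["region"].lower() == "true",
        "print_level": int(raw["print_level"]),
        "min_area": float(raw["min_area"]),
        "hist_bin_nb": int(raw["hist_bin_nb"]),
        "split": raw["split"].lower() == "true",
        "det_border": float(raw["det_border"]),
    }
```

An empty file thus yields the defaults listed above, while `split=false` alone switches off only the Split integration.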

Resources

Evaluation Datasets

Text detection

- ICDAR 2013 & 2015 Natural scene: ground truth .txt

Text segmentation

- ICDAR 2013 & 2015 Natural scene: ground truth .txt & labeled images

- ICDAR 2013 & 2015 Born-digital: ground truth .txt & labeled images

Download: Executable and config file evaltex.tar.gz

Dependencies: libTIFF and GraphicsMagick

Example: example.tar.gz containing a ground truth and a detection file

Credits

This work is part of the LINX project and was partially supported by FUI (Fonds Unique Interministériel) 14.

EvaLTex was written by Ana Stefania CALARASANU. Please send any suggestions, comments or bug reports to calarasanu@lrde.epita.fr.
